If ever a Skype interview felt more appropriate than a face-to-face encounter, then it would be talking to Annie Dorsen – the New York theater director known for her work on algorithms, technologies and our ever more intimate relationships with machines. In her trilogy of plays “Hello Hi There,” “A Piece of Work” and “Yesterday Tomorrow,” chatbots and algorithms take center stage, generating live scripts and scores from sources as diverse as Shakespeare’s Hamlet and the Beatles’ “Yesterday.” The use of artificial intelligence in Dorsen’s practice draws out an ontological ambiguity that is already embedded in theater, thus revisiting some of the most fundamental questions that have preoccupied the genre since its origins. The distinction between reality and illusion, and the degree to which the viewer opts in or out of the spectacle, find a broader sociopolitical resonance at a time when the line between truth and fiction is increasingly fuzzy – a confusion reinforced by the very nature of new technologies.

The originality of Dorsen’s work has not gone unnoticed; this October she was granted a MacArthur Fellowship, a lucrative five-year award commonly known as a “Genius Grant.” Among her various projects for the coming years is a book on algorithmic theater that will combine theoretical writings and reflections on her own practice with the texts that have influenced her thinking. So, with a little help from technology, Dorsen appeared on my computer screen to tell us more about her work.

Where did your interest in algorithms come from in the first place?

It was very accidental in a way. I had an idea for a project that would use the Chomsky-Foucault debate from the 1970s as a starting point. In fact, I had this really terrible idea to set it to music and make a kind of opera! I went to a composer friend of mine, Joanna Bailie, to talk with her about whether she’d work with me on the project. Through the course of our conversation, she mentioned that Alan Turing had an interesting perspective on the relationship between language and consciousness that might be helpful to think about. The debate between Chomsky and Foucault, among many other things, is about whether language is an inside-out process or an outside-in process – whether what we say is a good translation of our original thought, or whether we are mostly reproducing language we’ve already heard.

So Joanna Bailie, who I later worked with on “Yesterday Tomorrow,” directed me towards Alan Turing’s essay from 1950, “Computing Machinery and Intelligence.” It’s a foundational piece of writing in the history of natural language processing, and its thesis is that since we don’t know what thinking entails – how we do it or what it is – it’s incredibly difficult, and maybe impossible, for us to reproduce genuine thought in a machine. What we can perhaps do is reproduce the appearance or effect of thinking. This is where Turing came up with the notion of a language program that would create chat in a machine, and the famous “Imitation Game” where if you can program a machine cleverly enough, it could trick a human into thinking they were talking to another human rather than a machine, and you would have accomplished artificial intelligence. When I read this, my immediate thought was “this is theater”: it’s about illusions, it’s about creating an illusion for the audience, and it’s about the belief of the audience in what they are experiencing.

So, I started playing with chatbots on the internet – and this was 2009 so they were really stupid back then! The technology hadn’t changed significantly for 25 years or so; there were some attempts to try out different techniques over the years, but nothing had made the programs much better. That interested me also because, having learned a little bit about the history of natural language processing, I knew there had been so much early optimism. The slow discovery that cracking the problem was going to be much more difficult than they expected is one of the interesting stories of early computer science. It reminded me very much of how the pre-1968 political optimism soured, turned into a kind of disappointed realization that the project of political liberation was also much more difficult, that it wasn’t going to be enough just to get the workers and the students together on the same side, and then comes the revolution. So, in this debate in 1971, underneath the conversation that Chomsky and Foucault are having, you sense that part of what they are really struggling with is the failure of the 1960s left-wing political movements.

Based on my understanding of this kind of rhyme between the disappointment of an early generation of computer scientists and the disappointment of left political thinkers, and my understanding that how chatbots work is somehow the same mechanism that makes theater work, I started to imagine creating a chatbot that would be able to “talk philosophy” and extend the conversation that Chomsky and Foucault had started. So that was the beginning of my interest in working with algorithms and theater. For that project I wasn’t thinking about algorithms, I was thinking about early AI. Later, I became more focused on the notion of an algorithmic procedure, because it seemed to me that the performer in a computer program is the algorithm. It’s the active agent, and there’s a real classic theater trope that “theater is action.” Everything else that theater may do – the atmosphere, the beauty of the language, the interest of the characters – none of that really matters if there’s no action. This is coming from Aristotle: that action is “the vital principle and very soul of drama.” I started thinking that if that’s true, then algorithms are theater artists – they’re performing. Following this thought, I made projects like “A Piece of Work” and “Yesterday Tomorrow” that were very much about creating a procedure, or a set of procedures, that would generate performance, and the human performers would just be along for the ride.

When a human performer becomes an algorithm, what does that flag up about the difference between the two?

The human is not the algorithm; the human performer is the interface. There are really three necessary elements to what we call an algorithm: one is the data set that the algorithm works on or with; the second is the algorithm itself, which is a set of instructions or steps to be followed; and the third is the interface, which is how the results get communicated outwards. So, in my pieces, the humans are in the position of the interface: they’re there to communicate the result of the algorithm to the audience. Maybe that’s not very different from what actors are doing in traditional performances anyway. They’re the visible, audible part that communicates the text or the score. Of course, that’s kind of a rude way of talking about it – one of the actors I worked with didn’t love it so much when I called him an interface! But it’s not a bad analogy, because performers always have some capacity to color or shape the expression of the algorithm, just as the information a computer program provides can be presented in many different ways – the font or color of the text, the navigation design of the website, various kinds of data visualization, and so on. In that sense, the human performer is still very much a partner in the work, but with a precise role.

The primary relationship in my pieces is maybe not the relationship between the audience and the performer, but rather the relationship between the audience and the code. One of the things that I think people are trying to do when they watch the pieces is to understand what the computer code is doing – its mechanism. I don’t explain it at the top and I think one of the pleasures of the pieces – for those who find them pleasurable! – is to try and reverse-engineer in your head what you think the algorithm was asked to do.

In a way that is an extension of something that a lot of theater audiences will already be doing, which is trying to figure out the behind-the-scenes workings. There’s always this duality of willingly suspending your disbelief and being swept up in the spectacle, but also simultaneously watching out for the slippages that reveal the artifice involved. So it seems there’s a similar principle, only extended and perhaps even more present?

Yes, this is part of the famous paradox of theater: that it’s simultaneously happening and not happening, that it’s real and not real. You could get up from your theater seat, walk up onto the stage and push one of the actors over, but on the other hand, there’s some kind of shimmering unreality of what goes on on-stage that creates a barrier. Time also works that way in performance; everything works that way. There’s a “thereness” and there’s a “not-thereness,” and that combination gives performance its special quality, a sort of ontological ambiguity that’s sometimes uncomfortable and sometimes very exciting.

My recent thinking has taken me back towards AI and this real and not-real aspect. Politically and culturally we’re living in a moment where there’s a lot of confusion around what is truth and what is fiction, what’s misinformation, what’s intentional disinformation, what’s a fake, what’s a copy, whether it’s a human or a bot behind that Twitter account. There’s a kind of epistemological crisis going on and I think that crisis is really inherent to the technology. It’s not an accident that this is happening, and it’s not that there’s just a few bad people doing some bad things with an otherwise wonderful set of tools; the tools themselves are perfectly suited to creating this kind of confusion. So I’ve come back to thinking about questions of representation in theater, very old questions in theater history about not being able to trust your eyes. It’s a classic question that goes all the way back to the Greeks, and is certainly central to many of Shakespeare’s plays. One of the things that theater is almost always about is whether you can believe what you are seeing in front of you. How do you know what is true and what is false?

Once you have a set of ideas or a sense of a new direction, how does that then transform into a concrete idea for a theater piece?

With “Hello Hi There,” I started with the source text, then Joanna pointed me in a good direction, so the research and the material that I wanted to work with turned out to be a good match and the project kind of flowed. With “A Piece of Work,” I had the idea of the algorithm I wanted to work with before I had the source material. I knew I wanted to do something with a Markov chain, which is a famous but very simple resequencing algorithm, and at one point I just decided I would do it with “Hamlet,” because it’s the ultimate play about what it means to be a human. For “Yesterday Tomorrow,” I was talking to a computer scientist friend of mine asking him if he would explain genetic algorithms, evolutionary algorithms, and as he was explaining it I said, “Oh I see, so you could use it to turn one thing into another, like you could turn the song ‘Yesterday’ into the song ‘Tomorrow.’” It was an offhand, spontaneous thought. So I just got lucky there!

When I first started thinking about these questions of machine learning and data representation, I was thinking a lot about Plato and the “Republic” and the problem that Plato proposes around questions of truth and copies and artistic work. It’s taken me about a year and a half, and I’ve just now started arriving at a project I might do, but I won’t say more because the form is not yet finished…

What do you think is the specificity of algorithmic theater? Is that something you’ve thought a lot about – how algorithmic theater relates to algorithmic literature or art?

Yes, I actually teach a class about exactly this! My algorithmic predecessors are all in other fields – you can trace it all the way back to Tristan Tzara making a Dada poem and doing the first cut-up. Of course, that technique gets used again and again throughout the 20th century. I also think John Cage is an algorithmic artist; he calls it chance operations, of course. And I understand why he put the emphasis on the chance part of it, but if you squint, you can see that really the creative work that he did was the construction of the algorithm, the process, the procedure. In music, after and alongside Cage, there’s an enormous amount of work that’s been made using algorithms. In poetry as well, Jackson Mac Low, or the Oulipo writers. Many others. In the visual arts, there’s a whole group of artists who took the name “The Algorists” – starting in the late ’60s and ’70s. Manfred Mohr, for example, Roman Verostko, Jean-Pierre Hébert, Vera Molnar…

One of the ways to think about what I’ve been doing is adapting some of those techniques from other fields to theater – which causes all kinds of interesting new problems. There’s an essay that Manfred Mohr wrote in 1971 in the catalog for what I think was the first solo exhibit of a computer artist in Paris. He writes about the process that he went through to start creating computer works. He describes teaching a machine how to make drawings similar to what he had previously been making by hand. This was very influential on me when I started thinking about “A Piece of Work.” I wondered what it would take to teach a computer how to make “Hamlet.” I realized quite quickly that it was basically an impossible task. The amount of cultural, historical, social and psychological knowledge that we bring with us to any theater project is so vast and so complex that you’d essentially have to create a kind of Leibnizian universal knowledge machine in order to have a computer genuinely create theater.

But then we come back to the Turing proposal: that you don’t try to make a machine understand anything, you simply make a machine reproduce the effect of it. This is how the machine learning stuff works – on the statistical relationships between words in a sentence or a page, the probability that a certain word will follow another, or that this phrase will appear in context with these other phrases. That’s what we did with Hamlet in the end: we played some simple language games and made different slices through the text with very simple algorithms that re-arranged the words in the play in different ways.
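For readers curious about what “the probability that a certain word will follow another” can look like in practice, here is a minimal sketch of a word-level Markov chain in Python. It is an illustration only, not the code used in “A Piece of Work”: the function names `build_chain` and `generate`, the choice of a first-order chain (each word depends only on the one before it), and the one-line sample text are all assumptions made for the example.

```python
import random
from collections import defaultdict


def build_chain(words):
    """Map each word to the list of words that follow it in the source.

    Followers are stored with repeats, so a word that follows another
    more often is proportionally more likely to be picked later.
    """
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return chain


def generate(chain, start, length, seed=None):
    """Walk the chain, drawing each next word from the recorded followers."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length - 1):
        followers = chain.get(output[-1])
        if not followers:  # dead end: this word never has a successor
            break
        output.append(rng.choice(followers))
    return output


# Hypothetical sample text; a real piece would load the whole play.
source = "to be or not to be that is the question".split()
chain = build_chain(source)
print(" ".join(generate(chain, "to", 8, seed=1)))
```

Every pair of adjacent words in the output also occurs somewhere in the source, which is why the result tends to sound locally plausible while drifting away from the original sequence. The program has no notion of what any word means; it only resequences what it was given.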

Thinking about Turing’s question, or seeing your theater pieces, you inevitably end up reflecting on whether there’s something irreducible or unreproducible that sets a human apart from a machine.

Yes, there are a lot of possible hypotheses there. One important point is that a computer program has no intentionality behind it. Which is another way of talking about desire.

In traditional dramatic theater, a character doesn’t say anything on stage unless they want something. They’re trying to have an impact on the other people on stage or on the audience, in order to get what they want through persuasion or manipulation or whatever. Machines don’t have this desire. There’s nothing that a computer decides not to say; they have no thoughts that don’t get spoken, and that means there’s no subtext, which means there’s no self, no inner life. Another way of putting this question about interacting with the desire of the artist is that as a viewer, when you experience a work of art, you’re engaging with the mind of the artist. You’re looking past the surface expressions and asking: what does the whole thing do? What is the reason for being of this work of art? And that is a very complex mental function, putting it all together and drawing conclusions and interpretations from it, thinking about the relevance and the consequences. Humans are super good at that kind of thing, and computers are super bad at it!

I do think that it’s not simply our animal narcissism that brings us back to thinking about our own capacities when we’re confronted with computers doing human-ish things. You could say that it’s a narcissism, and there’s no doubt we humans are full of self-regard, but on the other hand, it’s one of the ways that we can start to approach the big unknowable questions that are always tantalizing: how does it come to be that I’m here and can feel things and see things and think things? The great ontological mysteries of the universe are pointed to by this difference between computer creativity and human creativity. By the failure of AI to be truly convincing, I mean. But this is changing quickly – as AI improves, the philosophical questions also change. There’s a new-ish text generating model called GPT-2, and it’s really pretty good. The researchers on the development team said that they wouldn’t release the full thing because the copy it makes of human speech is too convincing, and therefore too dangerous. Maybe that’s just marketing hype, but maybe not.

Do you think you end up taking a positive or negative position in terms of how you see these new technologies and algorithms?

I don’t know if I have an overall view. I’m extremely concerned about surveillance and surveillance capitalism, the amount of responsibility that we’ve handed over to algorithms and how obviously full of bias they are, how obviously prone to all kinds of abuse. I’m concerned about facial recognition technology and I’m incredibly frustrated that one consequence of the paralysis of our political system is that no one seems to be able to do anything about any of these things. We really need a complete overhaul of our legislative approach to technology, and to tech companies on the anti-trust side. So without a doubt, I have a very strong flashing red light about all of that. There are just way too many examples of people having their lives completely upended because an algorithm decided that their face looked like a bad person’s face. It’s totally crazy and on that level, I think we have big problems. I’m not a believer that technologies are necessarily inherently neutral. I think technologies suggest certain uses. Guns are designed to do a specific thing; they cannot be repurposed to do something other than fire bullets into living bodies. It’s the same with some of the machine learning technologies: they’re made to produce things that look convincingly like they were made by a human. Enough said, in a way. It’s obvious then that there’s a strong potential for abuse of trust, and further, a potentially really problematic collapse of the possibility for social cooperation. I follow Hannah Arendt on this: when you lose the shared basis for knowledge about the world, you lose the capacity to speak with, and be understood by, others, and then you lose everything. So I guess it’s fair to say that that’s more or less a negative view!

And despite that, one thing that comes up time and time again in reviews of your pieces is that they’re really funny. There’s a kind of joy about them.

The last thing that I would want in a work of art about this is something didactic and finger-wagging about the dangers. There are other forms of communication that are better suited to that. In terms of the artistic experience, what seems valuable to me is to spend time thinking about how we live with these technologies. The way we live with them is quite intimate and therefore sometimes funny, sometimes melancholy, sometimes lonely, and sometimes, surprisingly, digital information technology does live up to its promise to bring us together. So it’s all of those things, and I think those contradictions are really interesting, and also kind of moving and unsettling. It’s maybe already a complete cliché, but there’s the well-known quote by the sci-fi writer William Gibson: “The future is already here – it’s just not very evenly distributed.” I tend to think that’s right, and it also means that the dystopian, utopian and neutral aspects are all mixed up together. It’s not one thing. I don’t know that the #MeToo movement happens without Twitter, for example. Maybe it happens differently, but the particular kind of communication that these tools allow makes certain things possible, other things impossible. The technology does certain things well and certain things badly – it’s a jumble.

Article published on Blouin Artinfo.

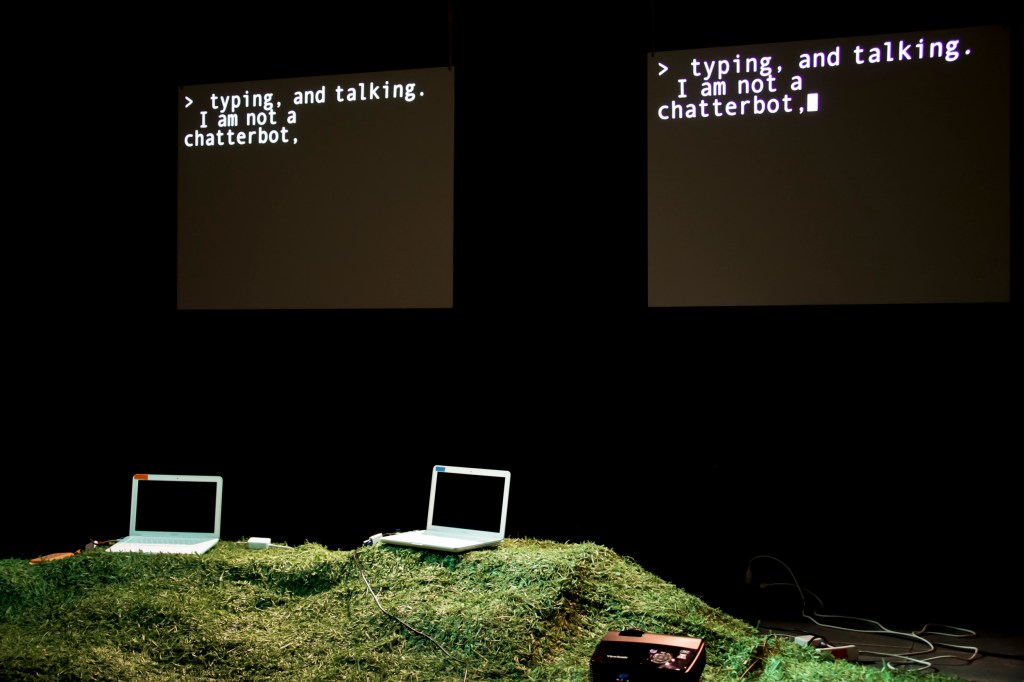

Featured image: Performance of Annie Dorsen’s “Hello Hi There.” Photo © W. Silveri.